OF-VO: Reliable Navigation among Pedestrians Using Commodity Sensors

Jing Liang, Yi-Ling Qiao, Tianrui Guan, Dinesh Manocha

Published in IEEE Robotics and Automation Letters, 2021

Abstract

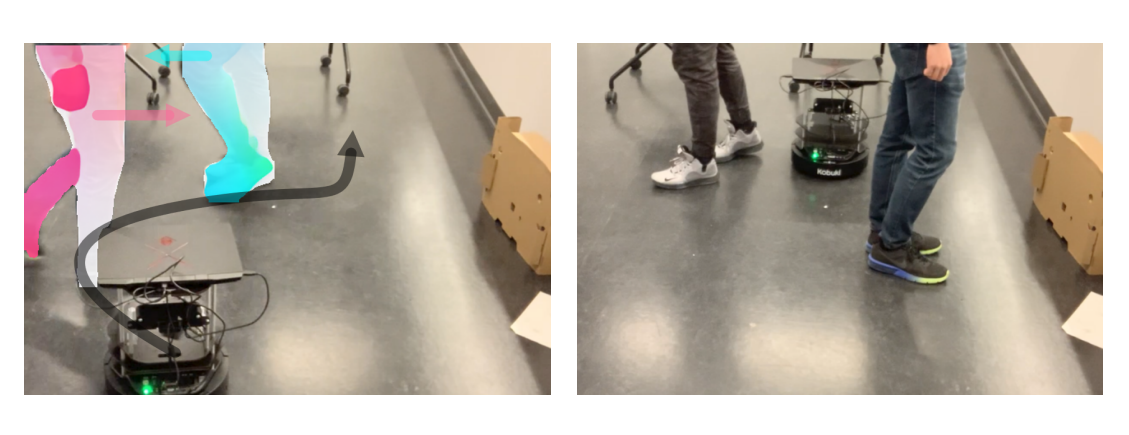

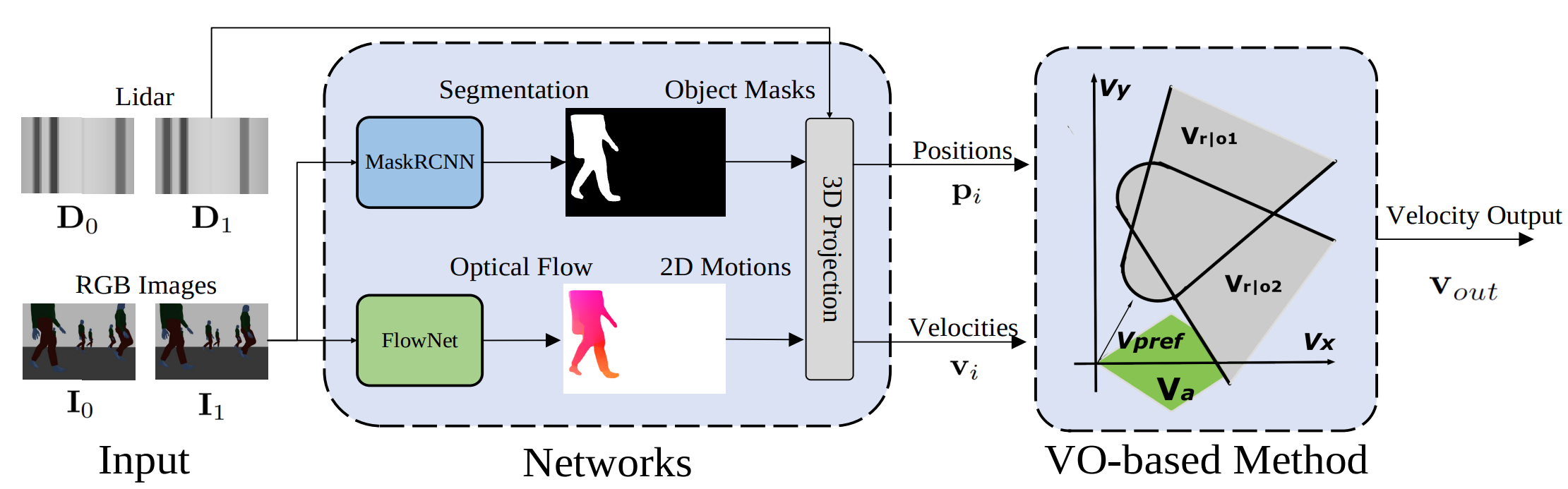

We present a modified velocity-obstacle (VO) algorithm that uses probabilistic partial observations of the environment to compute velocities and navigate a robot to a target. Our system uses commodity visual sensors, including a monocular camera and a 2D Lidar, to explicitly predict the velocities and positions of surrounding obstacles through optical flow estimation, object detection, and sensor fusion. A key aspect of our work is coupling the perception (OF: optical flow) and planning (VO) components for reliable navigation. Overall, OF-VO, which combines learning-based perception with model-based planning, outperforms prior algorithms in both navigation time and collision-avoidance success rate. Our method also provides bounds on the probabilistic collision avoidance algorithm. We highlight the real-time performance of OF-VO on a Turtlebot navigating among pedestrians in both simulated and real-world scenes.
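For readers unfamiliar with velocity obstacles, the following is a minimal sketch of the classic, deterministic VO test and a sampling-based velocity selection. It is not the OF-VO method: it assumes exact knowledge of each obstacle's position and velocity (which OF-VO instead estimates from optical flow, detection, and Lidar fusion), disc-shaped agents summarized by a combined radius, a fixed time horizon, and a polar grid of candidate velocities. The function names `in_velocity_obstacle` and `choose_velocity` and all parameters are hypothetical, chosen for illustration only.

```python
import math


def in_velocity_obstacle(p_robot, v_cand, p_obs, v_obs, radius, horizon=5.0):
    """Return True if driving at v_cand hits the obstacle within `horizon` seconds.

    Hypothetical helper (classic VO, not OF-VO). `radius` is the combined
    radius of robot and obstacle; positions and velocities are 2D tuples.
    """
    # Relative position (robot -> obstacle) and velocity of robot w.r.t. obstacle.
    px, py = p_obs[0] - p_robot[0], p_obs[1] - p_robot[1]
    vx, vy = v_cand[0] - v_obs[0], v_cand[1] - v_obs[1]
    # Contact when |p - v t| = r:  (v.v) t^2 - 2 (p.v) t + (p.p - r^2) = 0
    a = vx * vx + vy * vy
    b = -2.0 * (px * vx + py * vy)
    c = px * px + py * py - radius * radius
    if c <= 0.0:
        return True   # discs already overlap
    if a == 0.0:
        return False  # no relative motion and no overlap
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return False  # relative path never enters the combined disc
    t_hit = (-b - math.sqrt(disc)) / (2.0 * a)  # earliest contact time
    return 0.0 <= t_hit <= horizon


def choose_velocity(p_robot, v_pref, obstacles, v_max, radius,
                    horizon=5.0, n_speeds=5, n_angles=16):
    """Pick the collision-free candidate closest to the preferred velocity.

    `obstacles` is a list of (position, velocity) pairs. Returns None if
    every sampled candidate lies inside some velocity obstacle.
    """
    # Candidate set: standing still plus a polar grid up to v_max.
    candidates = [(0.0, 0.0)]
    for i in range(1, n_speeds + 1):
        speed = v_max * i / n_speeds
        for k in range(n_angles):
            theta = 2.0 * math.pi * k / n_angles
            candidates.append((speed * math.cos(theta), speed * math.sin(theta)))

    best, best_cost = None, math.inf
    for v in candidates:
        if any(in_velocity_obstacle(p_robot, v, p_o, v_o, radius, horizon)
               for p_o, v_o in obstacles):
            continue  # this velocity leads to a collision within the horizon
        cost = (v[0] - v_pref[0]) ** 2 + (v[1] - v_pref[1]) ** 2
        if cost < best_cost:
            best, best_cost = v, cost
    return best


if __name__ == "__main__":
    # Robot at the origin wants to drive +x at 1 m/s; a static obstacle sits 3 m ahead.
    v = choose_velocity((0.0, 0.0), (1.0, 0.0), [((3.0, 0.0), (0.0, 0.0))],
                        v_max=1.0, radius=0.5)
    print("chosen velocity:", v)
```

In this toy scene the straight-ahead velocity (1, 0) would reach the obstacle after 2.5 s, so it is rejected and a deflected candidate is returned instead. One simple way to reflect uncertain obstacle estimates, again an assumption rather than the paper's probabilistic bound, is to inflate `radius` by a margin proportional to the estimation error.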


The paper is available here. Please cite our work if you find it useful:

@ARTICLE{9460817,
  author={Liang, Jing and Qiao, Yi-Ling and Guan, Tianrui and Manocha, Dinesh},
  journal={IEEE Robotics and Automation Letters},
  title={OF-VO: Efficient Navigation Among Pedestrians Using Commodity Sensors},
  year={2021},
  volume={6},
  number={4},
  pages={6148-6155},
  doi={10.1109/LRA.2021.3090660}
}